---
license: llama2
library_name: peft
tags:
- llama-factory
- lora
- generated_from_trainer
base_model: BlackSamorez/Llama-2-70b-AQLM-2Bit-1x16-hf
inference: false
model-index:
- name: llama2_70b_aqlm_toolcall
  results: []
datasets:
- vicgalle/alpaca-gpt4
- glaiveai/glaive-function-calling-v2
language:
- en
pipeline_tag: text-generation
---

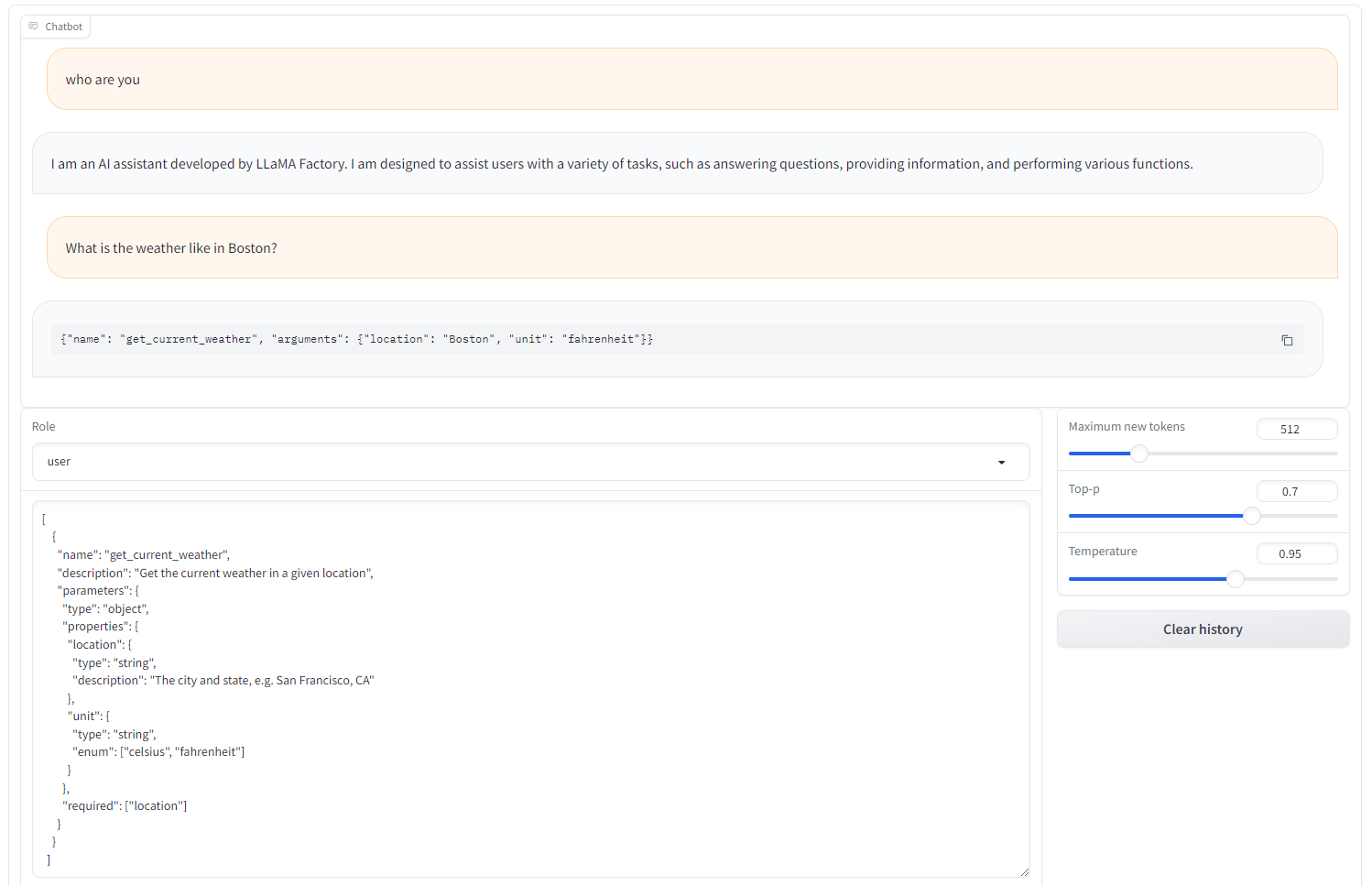

LLaMA-2 70B AQLM 2-bit QLoRA with function calling

This model is a fine-tuned version of BlackSamorez/Llama-2-70b-AQLM-2Bit-1x16-hf using LLaMA Factory.

Peak GPU memory usage during training was 24GB. The model has preliminary conversation and tool-use abilities.

Training and evaluation data

This model was fine-tuned on 1,000 examples from each of the Alpaca-GPT4 and Glaive-function-calling-v2 datasets (2,000 examples in total).
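The card does not say how the 1,000 examples per dataset were selected. As a rough illustration only, the sketch below draws a fixed number of examples from each source and shuffles them together. The helper `sample_mixture`, the stand-in lists, and the use of seed 42 for sampling are all assumptions for the example, not the actual LLaMA Factory procedure.

```python
import random

def sample_mixture(sources: dict[str, list], n_per_source: int = 1000, seed: int = 42) -> list:
    """Draw n_per_source examples from every source and shuffle them together.

    Hypothetical helper: the real selection procedure is not documented in this card.
    """
    rng = random.Random(seed)
    mixed = []
    for name, examples in sources.items():
        # Sample without replacement so no example is repeated within a source.
        picked = rng.sample(examples, n_per_source)
        mixed.extend({"source": name, "example": ex} for ex in picked)
    rng.shuffle(mixed)
    return mixed

# Stand-in data; real runs would load the two datasets instead.
sources = {
    "alpaca-gpt4": [f"alpaca-{i}" for i in range(5000)],
    "glaive-function-calling-v2": [f"glaive-{i}" for i in range(5000)],
}
mixture = sample_mixture(sources)
print(len(mixture))
```

Sampling the same count from each source keeps conversation and tool-calling examples balanced in the training mix.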

Usage

```python
from transformers import AutoModelForCausalLM, AutoTokenizer, TextStreamer
from peft import PeftModel

# Load the tokenizer from the adapter repository.
tokenizer = AutoTokenizer.from_pretrained("hiyouga/Llama-2-70b-AQLM-2Bit-QLoRA-function-calling")
# Load the 2-bit AQLM base model, then attach the LoRA adapter on top of it.
model = AutoModelForCausalLM.from_pretrained("BlackSamorez/Llama-2-70b-AQLM-2Bit-1x16-hf", torch_dtype="auto", device_map="auto")
model = PeftModel.from_pretrained(model, "hiyouga/Llama-2-70b-AQLM-2Bit-QLoRA-function-calling")
# Stream generated text to stdout as it is produced, omitting the prompt.
streamer = TextStreamer(tokenizer, skip_prompt=True, skip_special_tokens=True)

messages = [
    {"role": "user", "content": "Who are you?"}
]
# Apply the chat template and move the token IDs to the model's device.
inputs = tokenizer.apply_chat_template(messages, tokenize=True, add_generation_prompt=True, return_tensors="pt")
inputs = inputs.to(model.device)
generate_ids = model.generate(inputs, streamer=streamer)
```

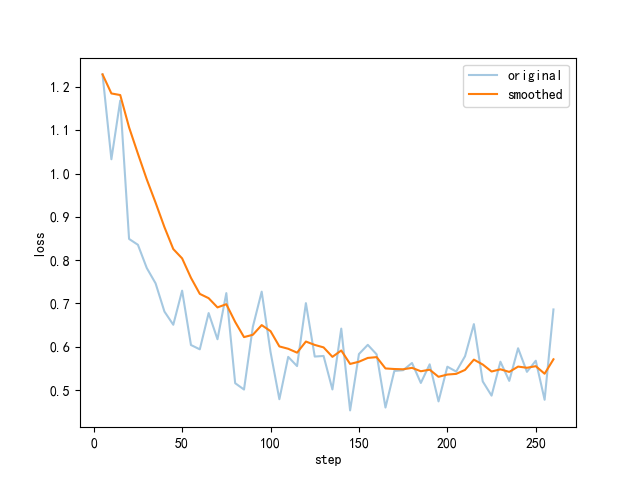

Training procedure

Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 1

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 8

- total_train_batch_size: 8

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- num_epochs: 1.0

- mixed_precision_training: Native AMP
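To make the relationship between these values concrete, here is a minimal sketch of how the total batch size follows from the per-device batch size and gradient accumulation, and how a cosine schedule decays the learning rate from 0.0001. The function names are hypothetical. The sketch also assumes a single device, no warmup, and 250 optimizer steps (2,000 examples / 8 per step with no held-out split), none of which the card states explicitly.

```python
import math

def effective_batch_size(per_device: int, grad_accum: int, num_devices: int = 1) -> int:
    """Examples consumed per optimizer step (num_devices=1 is an assumption)."""
    return per_device * grad_accum * num_devices

def cosine_lr(step: int, total_steps: int, base_lr: float = 1e-4) -> float:
    """Cosine decay from base_lr to 0 over total_steps, assuming no warmup."""
    progress = min(max(step / total_steps, 0.0), 1.0)
    return base_lr * 0.5 * (1.0 + math.cos(math.pi * progress))

total_steps = 250  # assumed: 2,000 examples / 8 per step, one epoch
print(effective_batch_size(per_device=1, grad_accum=8))
for step in (0, 125, 250):
    print(step, cosine_lr(step, total_steps))
```

With `train_batch_size: 1` and `gradient_accumulation_steps: 8`, the product matches the reported `total_train_batch_size: 8`, which is consistent with training on a single device.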

Training results

Framework versions

- PEFT 0.9.0

- Transformers 4.39.0.dev0

- Pytorch 2.2.1+cu121

- Datasets 2.15.0

- Tokenizers 0.15.2